New Controversial Windsurf Pricing Model

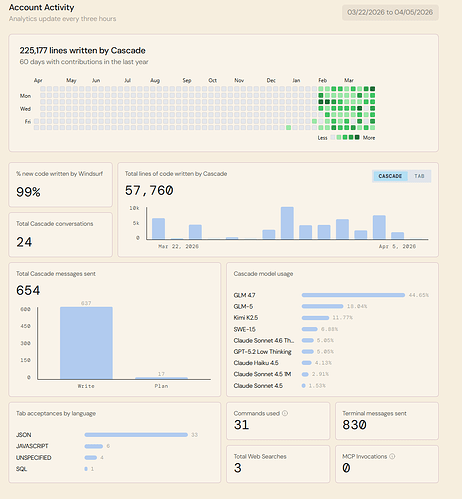

Windsurf’s new pricing model has caused a stir this week… we’re all waiting with bated breath to see how this pans out, hopefully in our favour.

So now there is Pro ($20) and Max ($200), as well as Teams plans… see Pricing | Windsurf for the feature breakdown at the bottom of the page.

Here is an estimate of the number of messages that you may expect to see:

| | Pro and Teams | Max |
|---|---|---|
| Premium Plus (Opus 4.6, GPT-5.4, GPT-5.3-Codex) | 7-27 messages / day | 42-170 messages / day |
| Premium (e.g. Sonnet 4.6, GPT-5.2, Gemini Pro) | 8-101 messages / day | 47-631 messages / day |
| Lightweight (e.g. Haiku, Flash) | 47-190 messages / day | 291-1,190 messages / day |

The usage limits assume a daily window. An additional weekly limit applies. The estimates are based on current usage patterns.
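To get a feel for what Max buys you over Pro, here is a rough sketch that compares the daily message estimates in the table with the plan prices. The numbers are copied from the table and the prices quoted earlier; the assumption that both prices cover the same billing period, and the names `ESTIMATES`, `PRICE` and `max_to_pro_ratio`, are mine and purely illustrative.

```python
# Daily message estimates copied from the table: (low, high) per day.
ESTIMATES = {
    "Premium Plus": {"pro": (7, 27), "max": (42, 170)},
    "Premium": {"pro": (8, 101), "max": (47, 631)},
    "Lightweight": {"pro": (47, 190), "max": (291, 1190)},
}

# Plan prices in USD as quoted in the post (assumed to cover the same period).
PRICE = {"pro": 20, "max": 200}


def max_to_pro_ratio(tier: str) -> tuple[float, float]:
    """Return (low_ratio, high_ratio) of Max vs Pro daily messages for a tier."""
    pro_low, pro_high = ESTIMATES[tier]["pro"]
    max_low, max_high = ESTIMATES[tier]["max"]
    return max_low / pro_low, max_high / pro_high


if __name__ == "__main__":
    price_ratio = PRICE["max"] / PRICE["pro"]
    print(f"Price ratio Max/Pro: {price_ratio:.1f}x")
    for tier in ESTIMATES:
        low, high = max_to_pro_ratio(tier)
        print(f"{tier}: {low:.1f}x to {high:.1f}x more messages on Max")
```

On these estimates Max gives roughly 6 times the messages for 10 times the price, so on message count alone Pro looks like the better deal per dollar. These are only estimates, though, and the plans may differ in other features too.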

AI and BDD

It’s actually super hard for AI to work in BDD mode and create specs BEFORE creating code. It has to do the reasoning for the code at the same time, so you may as well code out some of it for free while you are there. Working out that balance has been a learning experience too.

I have a daily and weekly quota and can buy extra credits if I go over. Not sure what I think about that: I would have preferred a weekly/monthly quota, as some days I use it and some days I don’t. So I will now need to create a bot that loads up a workflow of tasks, with testing, to see how we go.
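As a starting point for that bot, here is a minimal sketch of how a queue of tasks could be spread across days so neither the daily nor the weekly allowance is blown. Everything here is hypothetical: the `Task` class, the `plan_days` function, the per-task message guesses and the limit values are my own placeholders, and the sketch does not talk to Windsurf at all.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    est_messages: int  # rough guess at how many agent messages the task needs


def plan_days(tasks: list[Task], daily_limit: int, weekly_limit: int) -> list[list[Task]]:
    """Greedily pack tasks into days, in order, without exceeding either limit.

    Returns one list of tasks per day for a single week (at most 7 days).
    Tasks that do not fit in the week are left out of the plan.
    """
    days: list[list[Task]] = [[]]
    used_today = 0
    used_week = 0
    for task in tasks:
        if task.est_messages > daily_limit:
            continue  # can never fit in one day; needs splitting by hand
        if used_week + task.est_messages > weekly_limit:
            break  # weekly allowance exhausted
        if used_today + task.est_messages > daily_limit:
            if len(days) == 7:
                break  # no days left this week
            days.append([])  # roll over to the next day
            used_today = 0
        days[-1].append(task)
        used_today += task.est_messages
        used_week += task.est_messages
    return days


if __name__ == "__main__":
    queue = [Task("spec login", 5), Task("refactor query", 4), Task("write tests", 3)]
    for i, day in enumerate(plan_days(queue, daily_limit=8, weekly_limit=100), start=1):
        print(f"Day {i}: {[t.name for t in day]}")
```

The greedy, in-order packing keeps dependent tasks in sequence, which matters more to me than squeezing every last message out of a day. A smarter version could reorder independent tasks to fill the gaps.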

I’m a pretty heavy user at the moment as I’m rearchitecting a lot of SQL code to 2025 and updating my components to the next gen, so I’ve been using Claude (Sonnet/Opus) a LOT, but the over-engineering means you have to put SOOOO many guardrails around it.

GLM 4.7 and GLM 5 in beta on Windsurf

So now that GLM 4.7 and GLM 5 are in beta on Windsurf and dirt cheap to use, it could be good to see what I can get out of them this week.

I’ll put both in the ‘arena’ (another Windsurf feature that has two AIs do the same task so you can compare the quality of output) a few times as well… so it’s going to be an exciting week, as GLM 5 is meant to be killer!

Windsurf Blog on new pricing model

Introducing our new Windsurf pricing plans

What’s changing:

- No more credits. Your Free, Pro, or Teams plan includes a usage allowance that refreshes automatically on a daily and weekly basis. For the majority of users, this quota will be enough to fully cover all agent usage.

- For paid plans, if you go beyond your included usage, you can purchase extra usage which will be consumed at API pricing.

The CASCADE and PLAN modes are incredible in this IDE and make Copilot look like a toy. (Windsurf is built on Monaco, of course, like VS Code, so you can add in CFML extensions also.)

Also the workflows, skills, rules and memories features are a life-changer: I’ve moved totally away from instruction files now. I’ll post again about that once I get a full handle on how best to configure the combo.

And with GLM 4.7 at 0.25 credits (INSANE) and GLM 5 at 1.25 credits, that’s insane value and about 12 times cheaper than Claude atm…

Looking to self-host GLM 4.7 32B locally soon, that will be incredible…

ATM I’m also using SWE 1.5 for free on Windsurf for everyday tasks, but it’s VERY simple.

If you want to check it out and get some freebies, use this link: Get $10 in extra credits for Pro