TL;DR: I lost my mail queue while running Lucee in docker containers.

When you think about state persistence in Lucee, a couple of obvious things come to mind:

- DB data

- Lucee config files (lucee-web.xml, lucee-server.xml, and scheduler.xml)

That second bullet becomes especially important when you start using docker (or other kinds of short-lived instances).

You might also realize that session persistence is important depending on your load balancing strategy, etc.

However, there’s another bit of state that I only started thinking about after it bit me:

Lucee also manages “tasks.” I think those consist of the email queue and threads. (Maybe there’s more.)

Lucee keeps that information in the filesystem. In the official docker image, that path is (the confusingly named) /opt/lucee/web/remote-client.

If outgoing emails are still queued on the filesystem when a container is recreated, those emails are lost.

Options:

However, there may be a problem with that second option: If multiple Lucee containers run on a given node, was Lucee built to handle shared access to the remote-client directory? If not, doesn’t this corner case prevent multiple Lucee containers from running on a given docker node?

That’s just email; I haven’t really thought through the other “task” features. (I’m not sure I know what they all are.)

Good thoughts. The config issue is handled by using something like CFConfig to manage your configuration. The session storage is handled with an external session store. That’s how Ortus handles it at least. We handle E-mails by using an external service called Postmark which we configured via the ColdBox Mail Services module to send all of our messages. I have discussed the issue of task storage in general (which sending E-mails uses) with Micha internally and I think there should be a couple of things out of the box in this regard:

- Scheduled tasks should have ability to use external storage such as DB. Adobe CF does this

- Tasks should have ability to use external storage such as DB

I didn’t look to see if there are tickets for either of those, but if not we should create them.

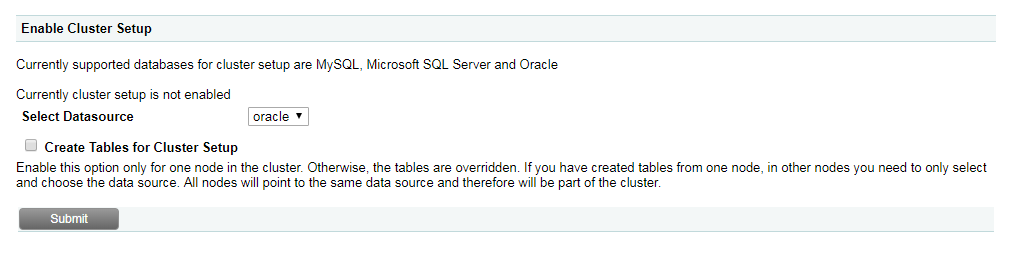

The way we do it is that our sessions are clustered and stored in a DB, so all the front-facing docker containers behave normally.

To ‘send email’ we have a CFC that writes the generated content to a database table. A traditional-style server then polls that table and handles sending the actual email.
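For anyone who wants to picture that setup, here is a rough sketch of the write-to-a-table-then-poll idea. It is in Python with SQLite purely so it can be run standalone; it is not the poster's actual CFC, and the table name `mail_outbox`, its columns, and the function names are all made up for illustration.

```python
import sqlite3

# Hypothetical schema: names are illustrative, not taken from any real app.
SCHEMA = """
CREATE TABLE IF NOT EXISTS mail_outbox (
    id        INTEGER PRIMARY KEY AUTOINCREMENT,
    recipient TEXT NOT NULL,
    subject   TEXT NOT NULL,
    body      TEXT NOT NULL,
    status    TEXT NOT NULL DEFAULT 'pending'
)
"""

def init_outbox(conn):
    """Create the outbox table if it does not exist yet."""
    conn.execute(SCHEMA)
    conn.commit()

def enqueue_mail(conn, recipient, subject, body):
    """Called by the web containers instead of sending mail directly."""
    cur = conn.execute(
        "INSERT INTO mail_outbox (recipient, subject, body) VALUES (?, ?, ?)",
        (recipient, subject, body),
    )
    conn.commit()
    return cur.lastrowid

def process_pending(conn, send, batch_size=10):
    """One polling pass on the sender: deliver pending rows, record the result.

    `send` is any callable taking (recipient, subject, body); it stands in
    for whatever actually talks to the mail server.
    """
    rows = conn.execute(
        "SELECT id, recipient, subject, body FROM mail_outbox "
        "WHERE status = 'pending' ORDER BY id LIMIT ?",
        (batch_size,),
    ).fetchall()
    sent = 0
    for mail_id, recipient, subject, body in rows:
        try:
            send(recipient, subject, body)
            status = "sent"
            sent += 1
        except Exception:
            # Keep the row so a failed message can be inspected or retried.
            status = "failed"
        conn.execute(
            "UPDATE mail_outbox SET status = ? WHERE id = ?", (status, mail_id)
        )
        conn.commit()
    return sent
```

The point of the design is that the queue lives in the database rather than in a container's filesystem, so recreating a web container loses nothing. With more than one sender polling the same table you would also need some way to claim rows, which this sketch leaves out.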

In a similar vein we have a traditional style server which purely handles scheduled tasks.

At some point we will probably move the scheduled tasks to a kubernetes-style cron job that starts a container on demand to process the job.

The only real thing left to deal with is caching; currently any caching is done in memory within the docker container, which mostly defeats the point. So at some point we will probably move that to a redis setup.

- Scheduled tasks should have ability to use external storage such as DB. Adobe CF does this

Could you elaborate on this? FWIW, I’d been planning to pull the scheduled tasks out of my main app and add them to a single-purpose little Lucee container that only handles scheduled tasks.

- Tasks should have ability to use external storage such as DB

I didn’t look to see if there are tickets for either of those, but if not we should create them.

I’ve created a ticket for this one: [LDEV-2030] - Lucee

I can’t elaborate too much since I’ve never used Adobe’s clustered task functionality. (We schedule our small number of tasks through a Jenkins job that just hits our swarm on whatever container is up at the time.) My understanding, however, is that Adobe ColdFusion’s scheduled task engine lets you connect it to a DB, and it uses a DB table to coordinate the scheduling of tasks so that any server can run them. That’s how I understand it to work at least.
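To make the "DB table coordinates which server runs a task" idea a bit more concrete, here is a minimal sketch. It is not how Adobe ColdFusion actually implements it (I don't know those details); it just shows one common way to do it, in Python with SQLite so it runs standalone. The `scheduled_tasks` table and all function names are hypothetical.

```python
import sqlite3

def init_scheduler(conn):
    # Hypothetical table: one row per task, with the next time it is due.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS scheduled_tasks ("
        " name TEXT PRIMARY KEY,"
        " interval_seconds INTEGER NOT NULL,"
        " next_run REAL NOT NULL)"
    )
    conn.commit()

def register_task(conn, name, interval_seconds, first_run):
    """Add a task if it is not registered yet (safe to call from every node)."""
    conn.execute(
        "INSERT OR IGNORE INTO scheduled_tasks VALUES (?, ?, ?)",
        (name, interval_seconds, first_run),
    )
    conn.commit()

def try_claim(conn, name, now):
    """Return True if this node won the right to run the task now.

    The UPDATE only matches while the task is due, and it pushes next_run
    forward in the same statement, so a second node's UPDATE matches no rows.
    """
    cur = conn.execute(
        "UPDATE scheduled_tasks SET next_run = ? + interval_seconds "
        "WHERE name = ? AND next_run <= ?",
        (now, name, now),
    )
    conn.commit()
    return cur.rowcount == 1
```

Every node would call `try_claim` on a timer and only run the task when it returns `True`. Because the claim is a single conditional UPDATE, it does not matter which container happens to be up at the time.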